⚡️ Faster by Default

Everyone benefits when platforms compete on performance.

WordPress has been making a concerted effort to improve their default performance, and we’re starting to see some very nice returns as a result. WordPress just released version 6.2, and their analysis shows a big performance boost: a 17-23% improvement in server-side performance for block themes, and 3-5% for classic themes.

It’s also been interesting to peek around at the dashboards they have set up to see how they’re monitoring these changes and the different measurements they’re looking at.

Of course they’re not the only ones.

Shopify has been doing great work around improving their default performance, and they’ve been blogging about some of their wins. Netlify, Vercel, Cloudflare Pages, and the like lean heavily on promised performance benefits in how they position themselves. Competing on performance is a great thing for everyone on the web.

A few years back, Ilya Grigorik gave a talk about the head (top 100 sites), torso (next 10,000 sites), and tail (the rest) of the web. That framing has stuck with me ever since. The way we improve performance differs a bit between those three segments, because organizations in the head of the web, for example, tend to have very different structures, resources, and needs than organizations in the tail.

Improving the performance of the underlying platforms that power a large percentage of the web is one of the fastest ways to raise the tide for all.

🖼️ Largest Contentful Paint and Bits Per Pixel

Chrome is constantly refining the way it reports its Core Web Vitals metrics, and Largest Contentful Paint in particular has seen numerous iterations. The latest tweak is that, starting in Chrome 112, Largest Contentful Paint is starting to ignore “low-entropy” images.

In their continuous focus on how to make Largest Contentful Paint (LCP) a better proxy for “most important bit of content”, Chrome is looking to weed out large images that have no content—things like placeholder images, solid color background images, etc. Some of those are reported as the largest bit of content today despite the fact that they’re not really “content” images.

The fact that entropy has never factored into LCP has also left the door open to gaming the metric with dubious techniques.

In an attempt to combat this, they’re now going to look at the entropy of an image (measured in bits per pixel, or bpp) to determine how “contentful” it really is. Anything with virtually no content (< 0.05 bpp) will not count towards LCP. If you want to see how that bpp is calculated, I built a little demo page where you can upload an image, play around with its width, and watch the bpp change. Stoyan also built a bookmarklet that you can use to see the bpp of images in the wild.

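In most situations, this change is unlikely to impact your LCP, but I have seen some lazy-loading techniques that rely on invisible data URIs as placeholders. Sites using those sorts of approaches are likely to see their LCP metric worsen, since the placeholder no longer counts and LCP will instead be measured on content that arrives later, such as the real image.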
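To make the bits-per-pixel arithmetic concrete, here's a minimal Python sketch. It assumes bpp is the image's encoded size in bits divided by the number of pixels it's displayed at, with the 0.05 cutoff mentioned above; the function names and the file sizes are hypothetical, purely for illustration, and this is not Chrome's actual code.

```python
# Hypothetical helpers for illustration. The formula is an assumption modeled on
# Chrome's description: encoded size in bits / displayed pixel area.
LOW_ENTROPY_THRESHOLD_BPP = 0.05


def bits_per_pixel(encoded_bytes: int, displayed_width: int, displayed_height: int) -> float:
    """Encoded image size in bits divided by the number of pixels it is displayed at."""
    area = displayed_width * displayed_height
    if area <= 0:
        raise ValueError("displayed area must be positive")
    return encoded_bytes * 8 / area


def counts_toward_lcp(encoded_bytes: int, displayed_width: int, displayed_height: int) -> bool:
    """True if the image clears the low-entropy cutoff (under 0.05 bpp is ignored)."""
    bpp = bits_per_pixel(encoded_bytes, displayed_width, displayed_height)
    return bpp >= LOW_ENTROPY_THRESHOLD_BPP


if __name__ == "__main__":
    # Made-up file sizes and display dimensions, just to show the arithmetic.
    examples = [
        ("150 KB photo at 1200x800", 150_000, 1200, 800),
        ("same photo stretched to 5000x5000", 150_000, 5000, 5000),
        ("43-byte placeholder GIF at 1200x800", 43, 1200, 800),
    ]
    for label, size, w, h in examples:
        bpp = bits_per_pixel(size, w, h)
        print(f"{label}: {bpp:.4f} bpp, counts toward LCP: {counts_toward_lcp(size, w, h)}")
```

Running it puts the photo at 1.25 bpp, the stretched copy at 0.048 bpp (just under the cutoff, which is why display width matters), and the tiny placeholder at roughly 0.0004 bpp.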

⚖️ Learn about Load Balancing

There’s an art to explaining concepts in a way that makes them feel approachable. A fantastic post from Sam Rose last week demonstrates this perfectly.

Sam wrote an explainer of different load balancing algorithms, and each one is illustrated with a series of animations so you can understand exactly how it works and what its limits are.

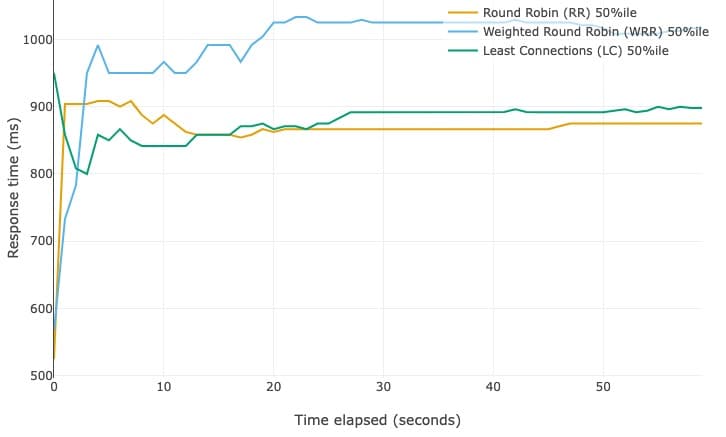

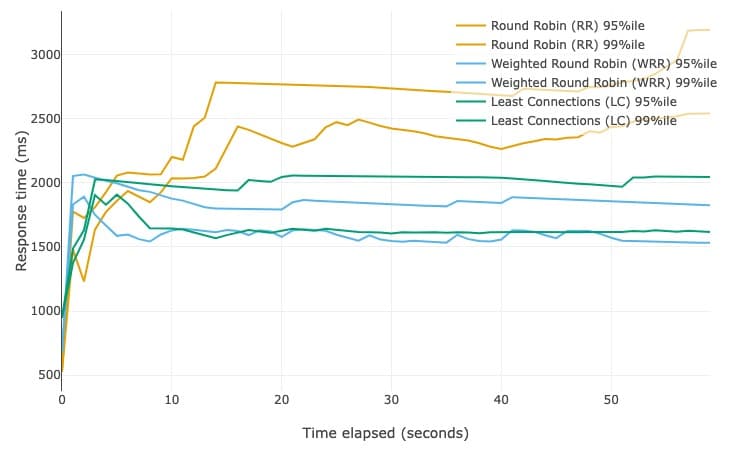
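For a feel of how two common strategies differ, here's a minimal Python sketch of round robin and least connections. It's an illustration under simplifying assumptions (a hypothetical dict of in-flight request counts per server, hypothetical class names), not Sam's code or necessarily the exact variants his post simulates.

```python
from itertools import cycle


class RoundRobin:
    """Hands requests to servers in a fixed rotation, ignoring how busy each one is."""

    def __init__(self, servers):
        self._rotation = cycle(servers)

    def pick(self, in_flight):
        # in_flight is ignored: round robin never looks at current load.
        return next(self._rotation)


class LeastConnections:
    """Sends each request to the server with the fewest in-flight requests."""

    def __init__(self, servers):
        self._servers = list(servers)

    def pick(self, in_flight):
        # min() returns the first server with the lowest count, so ties follow list order.
        return min(self._servers, key=lambda s: in_flight[s])


if __name__ == "__main__":
    servers = ["a", "b", "c"]
    # Hypothetical snapshot: server "a" is bogged down with slow requests.
    in_flight = {"a": 5, "b": 0, "c": 1}
    rr = RoundRobin(servers)
    lc = LeastConnections(servers)
    print("round robin ->", [rr.pick(in_flight) for _ in range(3)])  # ['a', 'b', 'c']
    print("least connections ->", lc.pick(in_flight))  # b
```

Round robin keeps sending every third request to the overloaded server no matter what, which is one way a simple rotation can look fine most of the time while hurting the slowest requests.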

It’s also a great cautionary tale about why you need to focus on the long tail, not just the median. In the simulation data he provides, the Round Robin algorithm (the simplest approach to load balancing) outperforms the other approaches at the median.

But when you zoom out and look at the 95th and 99th percentiles, the Round Robin algorithm suddenly becomes the worst performer.

Now, you may decide that some trade-off in the long tail is OK, but a carefully considered trade-off is always better than one you don’t know you’re making, and you often only spot that trade-off if you look beyond the median.
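To see how the median can hide a bad tail, here's a small Python sketch using the nearest-rank definition of a percentile on a made-up set of latencies (not Sam's simulation data). Other tools, such as NumPy's default method, interpolate between samples and can report slightly different values.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank into the sorted samples
    return ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical latencies (ms) for 100 requests: mostly fast, a few slow stragglers.
    latencies = [20] * 94 + [40] * 4 + [900] * 2
    for p in (50, 95, 99):
        print(f"p{p}: {percentile(latencies, p)} ms")  # p50: 20, p95: 40, p99: 900
```

The median looks great at 20 ms, while p99 surfaces the 900 ms stragglers that a median-only view would never show.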

Take care,

Tim